Did you know that small data is essential for business intelligence analysis and reporting? You no longer need to rely on complex data mining to predict AI trends. This is why companies such as Cane Bay Partners are focusing on scaling small data to improve their financial services. This approach has many benefits, which can be summarized as 5 core benefits of scaling small data. Keep reading to find out more.

Use Familiar Tools

As a data scientist or financial technologist, you always want to get the most out of your data. Sticking to what you know should benefit you the most: when you use tools that you’re familiar with, scaling your data becomes that much easier. The world of data science is constantly changing, and you’re always trying to keep up with the latest trends. If you’re planning on scaling your data, the simplest way to do this is to modernize the tools that you’re already using. You should find a way to keep adapting your tools while ensuring that they don’t become too complicated to meet your scalability requirements.

Incorporate AI Accelerators

Your computing software needs to perform at its best. While your standard platform can deliver outstanding performance, giving it a boost should be beneficial. This is where hardware AI accelerators come in. You should consider using accelerators such as GPUs to meet your overall performance requirements. These accelerators matter even more when you also focus on software optimization. For instance, if your research focuses on big data or machine learning, you can incorporate Intel optimizations, which can improve your performance by as much as 100x. You also gain other benefits, such as better accuracy in your data collection.

Reuse Your Code

You’d be surprised to find that reusing your code can help you scale your data more efficiently. In the AI space, things are always changing. Just look at the growing number of algorithms for everything data-related. This is why it’s important to avoid maintaining separate code for each task in your system. Using different codebases doesn’t only take up a lot of your time, it also forces you to juggle different tools and programming languages. If you want to build an efficient AI platform, you should consider reusing your code to ensure maximum performance.
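As a rough illustration of code reuse, the sketch below applies one shared helper to two different datasets instead of writing a separate routine for each. The function name `standardize` and the sample figures are hypothetical and chosen only for this example.

```python
from statistics import mean, pstdev


def standardize(values):
    """Return z-scores for a list of numbers (hypothetical shared helper)."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # All values are identical, so every z-score is zero.
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


# Made-up sample data: the same helper serves both datasets.
loan_amounts = [1000, 1500, 2000]
repayment_days = [10, 20, 30]

scaled_loans = standardize(loan_amounts)
scaled_days = standardize(repayment_days)
```

Because both datasets pass through the same function, a fix or improvement to `standardize` benefits every caller at once.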

Use Infinite Cloud Scaling

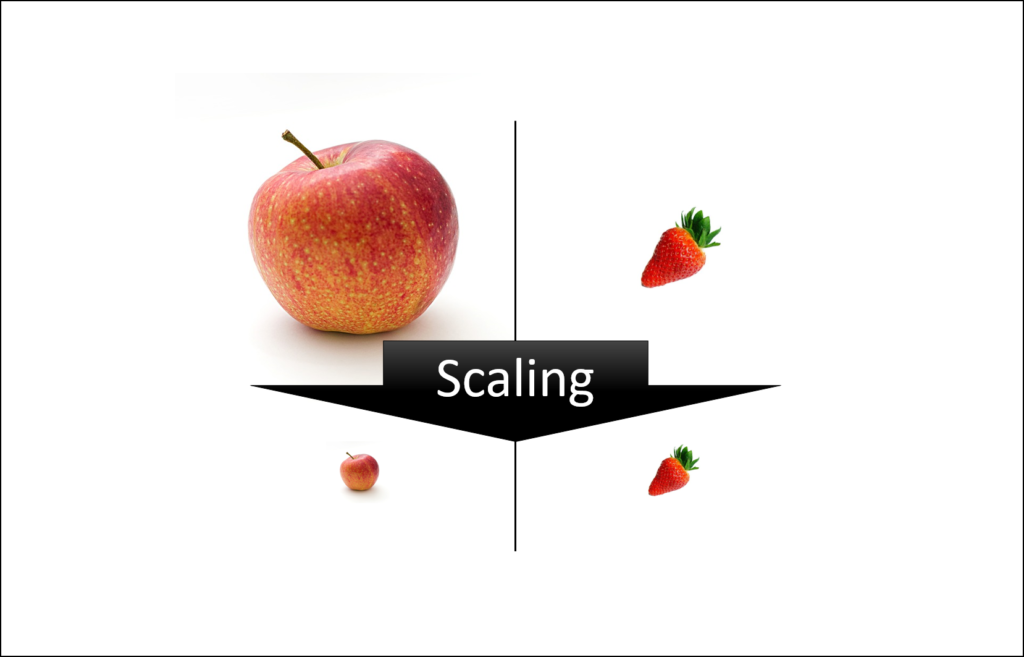

When you’re still in the development stage, it’s easy to contain your AI scaling in a simple prototype. However, the moment you think of expanding your scope, moving to a cloud with effectively unlimited capacity is a good idea. Scaling isn’t as easy as you might think. It’s a complex process that can lead to poor scalability if it’s not done correctly. If you wish to avoid mistakes such as rewriting your code or duplicating data, you should consider scaling up your data on a cloud. If you start on a small scale using your laptop, you should consider using open-source tools to migrate your data to the cloud seamlessly.
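One way to avoid rewriting code when you scale is to keep the logic the same and make only the amount of parallelism configurable. The sketch below is a minimal illustration of that idea using Python’s standard library: local threads stand in for cloud workers, and the names `summarize_chunk` and `overall_mean` are hypothetical. A real migration would rely on a distributed framework of your choice.

```python
from concurrent.futures import ThreadPoolExecutor


def summarize_chunk(chunk):
    """Return the partial sum and count for one chunk of data."""
    return sum(chunk), len(chunk)


def overall_mean(chunks, max_workers=1):
    """Compute the mean across all chunks; only max_workers changes with scale."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        partials = list(pool.map(summarize_chunk, chunks))
    total = sum(s for s, _ in partials)
    count = sum(n for _, n in partials)
    return total / count if count else 0.0


# Made-up sample data split into chunks.
chunks = [[1, 2, 3], [4, 5], [6]]

laptop_result = overall_mean(chunks, max_workers=1)  # small, laptop-style run
bigger_result = overall_mean(chunks, max_workers=4)  # same code, more workers
```

The two calls return the same value, which is the point: the computation is written once and only the worker setting changes as the environment grows.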

Use Existing Infrastructure

Sometimes, you don’t have to build from scratch. In fact, you can build your AI scaling on existing infrastructure. When you choose this route, you save time and money, because you take advantage of data systems that are already in place. Fortunately, there are reputable systems that help you leverage your existing data efficiently.

In summary, scaling your data calls for simple methods. Using simple tools and building on what already exists should save you time and money.